The Gray Market: How Christie’s So-Called ‘AI-Generated’ Art Sale Proves That Records Can Distort History (and Other Insights)

By Tim Schneider, Art Business Reporter.

Our columnist on the hidden dangers and distortions created by the all-consuming hype around Christie’s partnership with Obvious.

Every Monday morning, artnet News brings you The Gray Market. The column decodes important stories from the previous week—and offers unparalleled insight into the inner workings of the art industry in the process.

For this edition, three thoughts on the most dumbfounding story of the week…

SHORT CIRCUIT

On Thursday, a gilt-framed print on canvas by the algorithm-wielding French trio Obvious sold for $432,500 with the buyer’s premium included, more than 4,320 percent of its high estimate of $10,000. And while the sale brings up several issues worth our attention, the most important to me is what it shows about the art market’s ability to mold the historical narrative to its liking.

For roughly the past two months, Christie’s has loudly broadcast to the traditional art world that this work, titled Portrait of Edmond de Belamy, is “the first piece of AI-generated art to come to auction.” However, that claim is dubious in two important ways, both of which rely on our willingness to accept Christie’s marketing at face value.

First, as Naomi Rea and I wrote last month, the portrayal of this work as “AI-generated” (or similar) is crude at best. It conflates humans feeding labeled examples into what began as an open-source algorithm with the grand concepts of developing artificial general intelligence or superintelligence, in which machines become sentient and goal-directed without human guidance.

There is a robust debate raging right now about how much credit Obvious should be able to claim for Portrait of Edmond de Belamy—and not just in terms of what is, in my opinion, the substantially misleading gesture to list the algorithm as the print’s “artist.” Since Naomi will have more to report about that angle soon, I’ll just make the point that even understanding what happened requires unpacking a wave of technical jargon unfamiliar to most of the population, both inside and outside the traditional art world (including us at artnet News, who have been imperfect so far). And the learning curve opens up potential for distortion, whether knowing or unknowing.

Based on what I’ve seen, I tend to believe that Christie’s itself falls into the “unknowing” category. Head of prints and multiples Richard Lloyd, who consigned the portrait from its makers, admitted to The Art Newspaper that he “only learned of Obvious when he read ‘an article on artnet about a collector who had bought one of their works’ earlier this year.” To me, this is about as reactive as looking up from a fortune cookie promising true love and proposing to the first person you see in Panda Express, and it speaks volumes about how superficially the high end of the market is engaging with art and machine learning right now.

But tech-world terminology isn’t the only miscommunication here. There is at least as much slanting happening when it comes to the side of this story recognizable to practically everyone in the traditional art world: the notion of being “first to come to auction.”

A Vincent van Gogh-inspired Google Deep Dream painting. Image courtesy of Google.

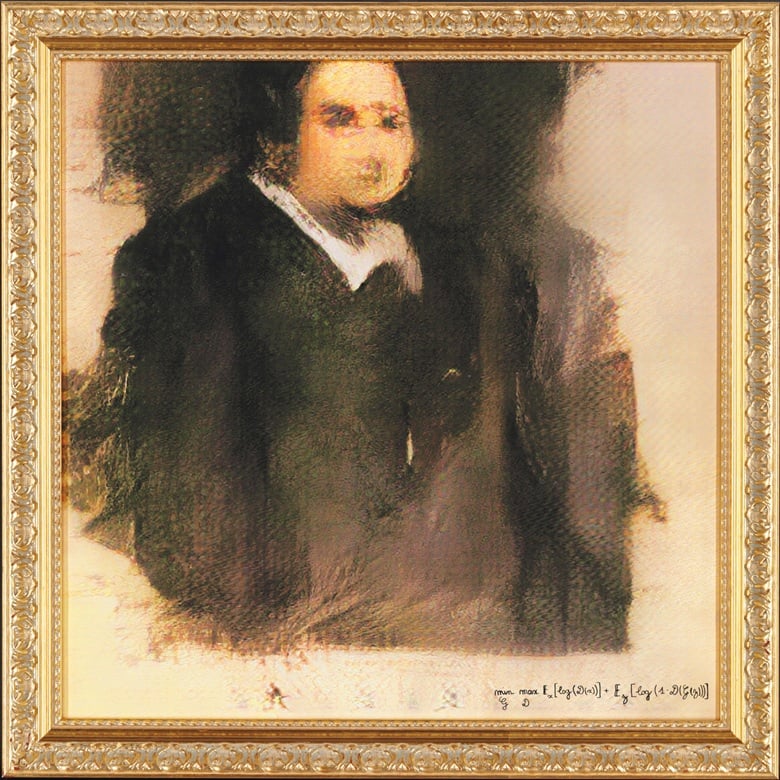

WHO HOLDS THE PEN?

In February 2016, San Francisco’s Gray Area Foundation for the Arts raised $98,000 in a benefit auction of works by 10 artists produced using DeepDream, Google’s open-source neural network. For the uninitiated, a neural network is the same type of software that Obvious used to create Portrait of Edmond de Belamy. So if we’re going to consider the French trio’s work “AI-generated,” then Gray Area technically beat Christie’s to the auction block for this genre by more than two and a half years.

But when I raised this point with multiple people in the art business, they all responded with some variation of this thought: “Well, that was just a nonprofit benefit, not a sale at one of the big houses.”

This reply reveals a tacit assumption that has wormed its way into the art-world consciousness: that “coming to auction” no longer refers to a general mode of public sale. Instead, it refers to a mode of public sale carried out by a market-leading gatekeeper.

More importantly, why has this become the working definition of “coming to auction”? Because, by and large, we’ve tacitly come to accept that market-leading gatekeepers are the sources that matter most in charting the history of the art market. And as the market becomes an increasingly powerful force in shaping public understanding, they also become (like it or not) the sources that matter most in charting the history of art itself.

This phenomenon isn’t exclusive to the arts. If you’ll allow me a sports analogy, Major League Baseball, the US professional league for the sport, has called its championship round the World Series since 1903. Why has global success been defined by MLB standards for 115 years? Because it has consistently been the most visible, most profitable, and most prestigious baseball league on the planet. In sports as in art as in so much else, might makes right.

Is much of the traditional art world now on track to overlook important machine-learning artists the way it overlooked so many important non-white, non-male artists in the past? Kerry James Marshall, Untitled (Painter) (2009). Courtesy of the Museum of Contemporary Art Chicago. Photo: Nathan Keay, © MCA Chicago.

MAKING HISTORY, OR REPEATING IT?

Now, in fairness, Christie’s has been more circumspect about terms in some press materials than in others, for instance by calling itself (emphasis mine) the “first *auction house*” to offer a work like Portrait of Edmond de Belamy, or by describing the print as “the first AI-generated *portrait* to come to auction.”

Yet seeing and appreciating fine distinctions like these demands legalistic attention to detail. They might matter if everyone in the art world also properly pluralized states’ top law minds as “attorneys general” or used the Chicago Manual of Style to format their Instagram captions. But we don’t.

So what the art media and the art industry communicate is the original, grandiose narrative—the one that casts Christie’s and Obvious as the path-breakers in a field where less visible artists have been pioneering for years.

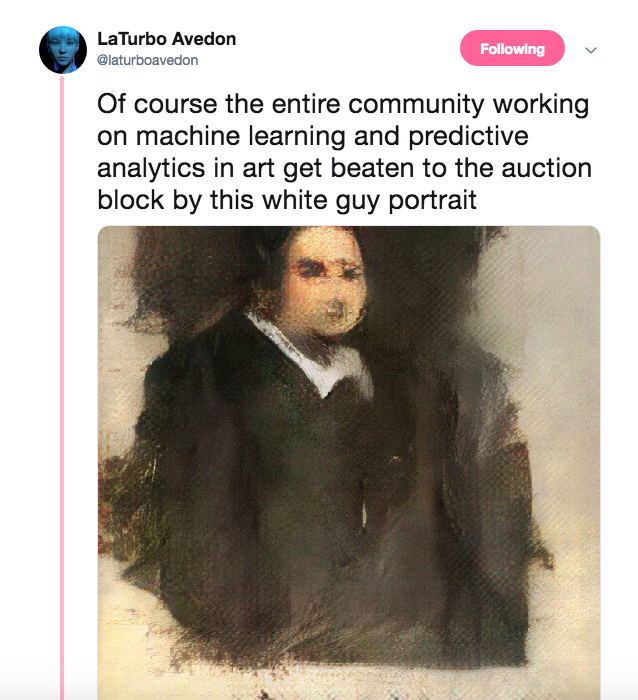

LaTurbo Avedon, an artist whose practice delves into authorship in virtual space and other issues, tweeted an all-too-telling thought minutes after the Obvious sale:

We in the art world are now in the midst of a great reawakening to artists and practices unfairly slept-on during the arc of the 20th century: women artists, LGBTQI artists, artists of African or Latinx descent, artists outside the western canon. Seemingly every week, someone in the traditional art world makes news with some action intended to correct what we now universally acknowledge as heinous oversights by the institutional and commercial sectors alike.

Together, the Obvious sale and the rhetoric around it reveal something hugely distressing: Even as we in the traditional art world try to make amends for these mistakes, many of us are making the same kind of mistake again right now. Only this time, as Avedon’s tweet suggests, we’re doing it with artists working in new media and emerging technologies.

To be clear, I am not arguing that failing to acknowledge art-tech pioneers is as morally reprehensible as discriminating against people for inherent characteristics of their humanity. But I am arguing that ignorance of, or strictly superficial engagement with, technology is creating blind spots in art similar to those that the same behaviors toward gender, sexuality, ethnicity, and geography created in the past.

By and large, we in the traditional art world are not doing the research. We are not engaging earnestly with unfamiliar communities. We are not questioning the story being blasted out to us by the people with the loudest, most expensive megaphones.

But it’s still early. The mainstream narrative on art and machine learning has not settled yet. We can still correct the distortions.

However, if we don’t make that effort, it will be proof positive of how little we’ve really learned—not about machines but about each other, and ourselves. And perhaps worst of all, it will poise us to repeat the same historical error yet again somewhere else. That is, if we’re not doing it already.

That’s all for this week. ‘Til next time, remember: There may be a lot of work to do on artificial intelligence, but the same can be said for natural intelligence, too.
